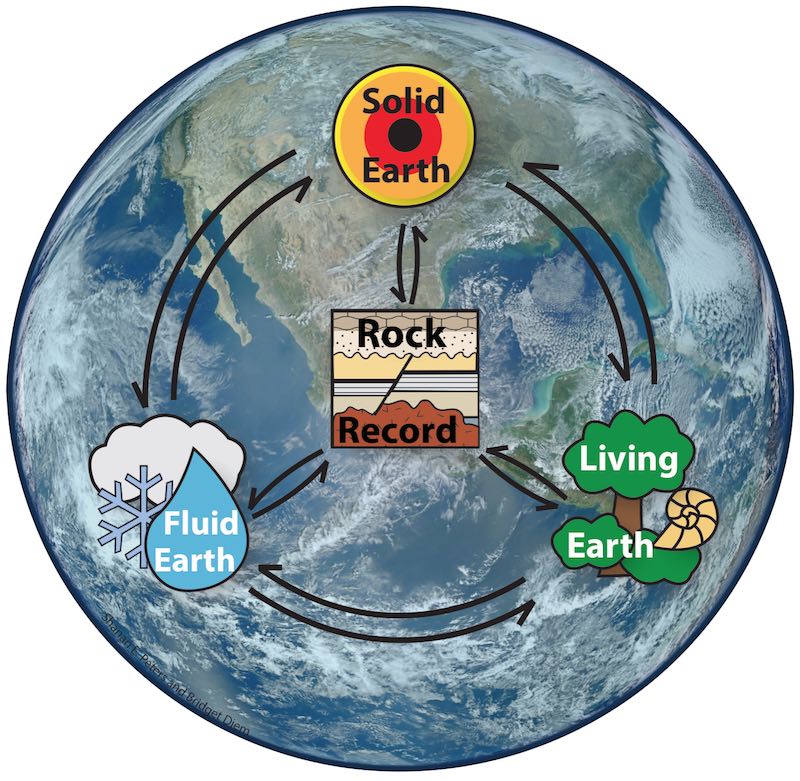

Macrostrat

Comprehensive database for quantitative stratigraphy and a platform for discovering and organizing geological data.

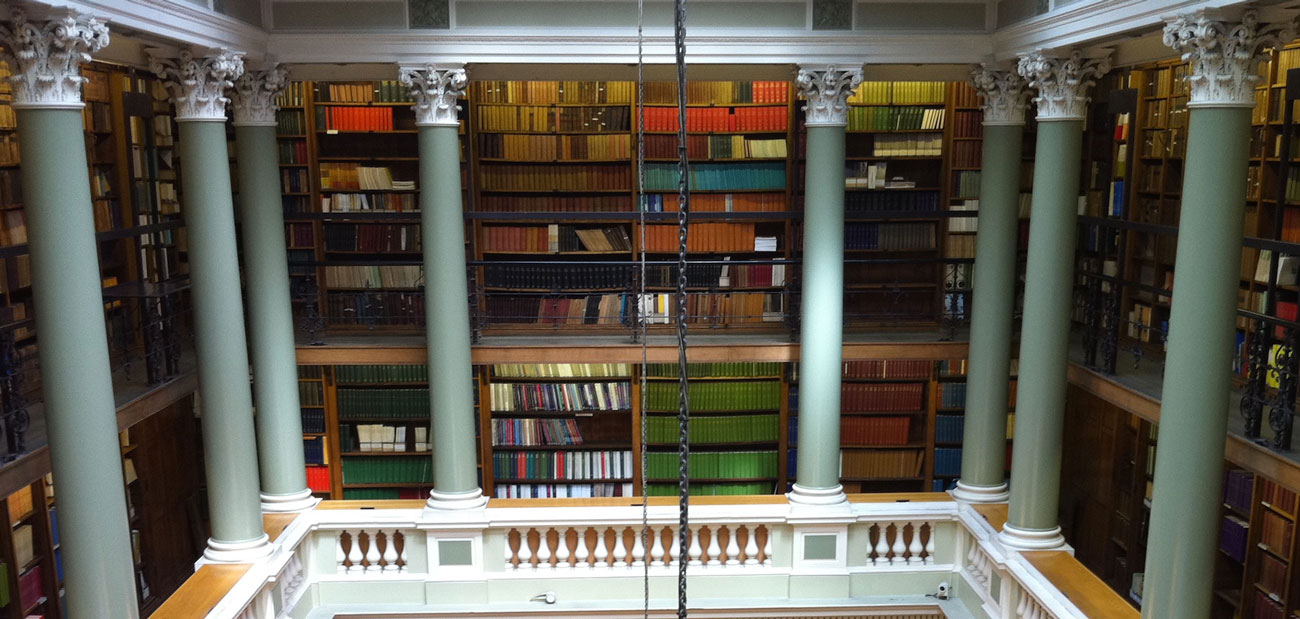

xDD

Formerly known as GeoDeepDive: a machine reading and learning system, built upon a new kind of digital library resource.

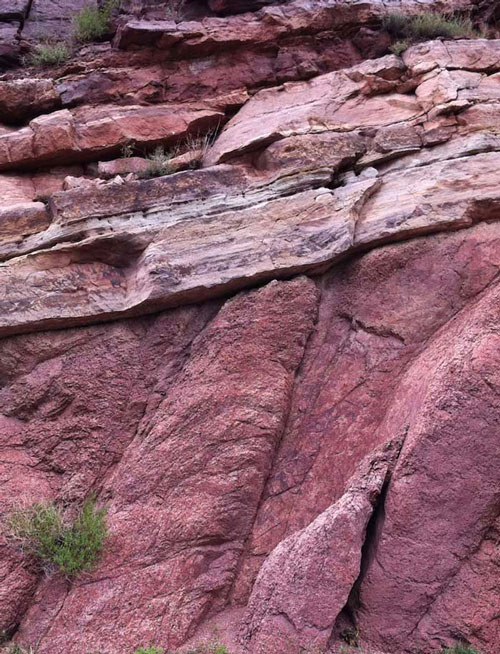

Field projects

From the Great Unconformity to stratigraphic paleobiology, ongoing projects get us out into the field.